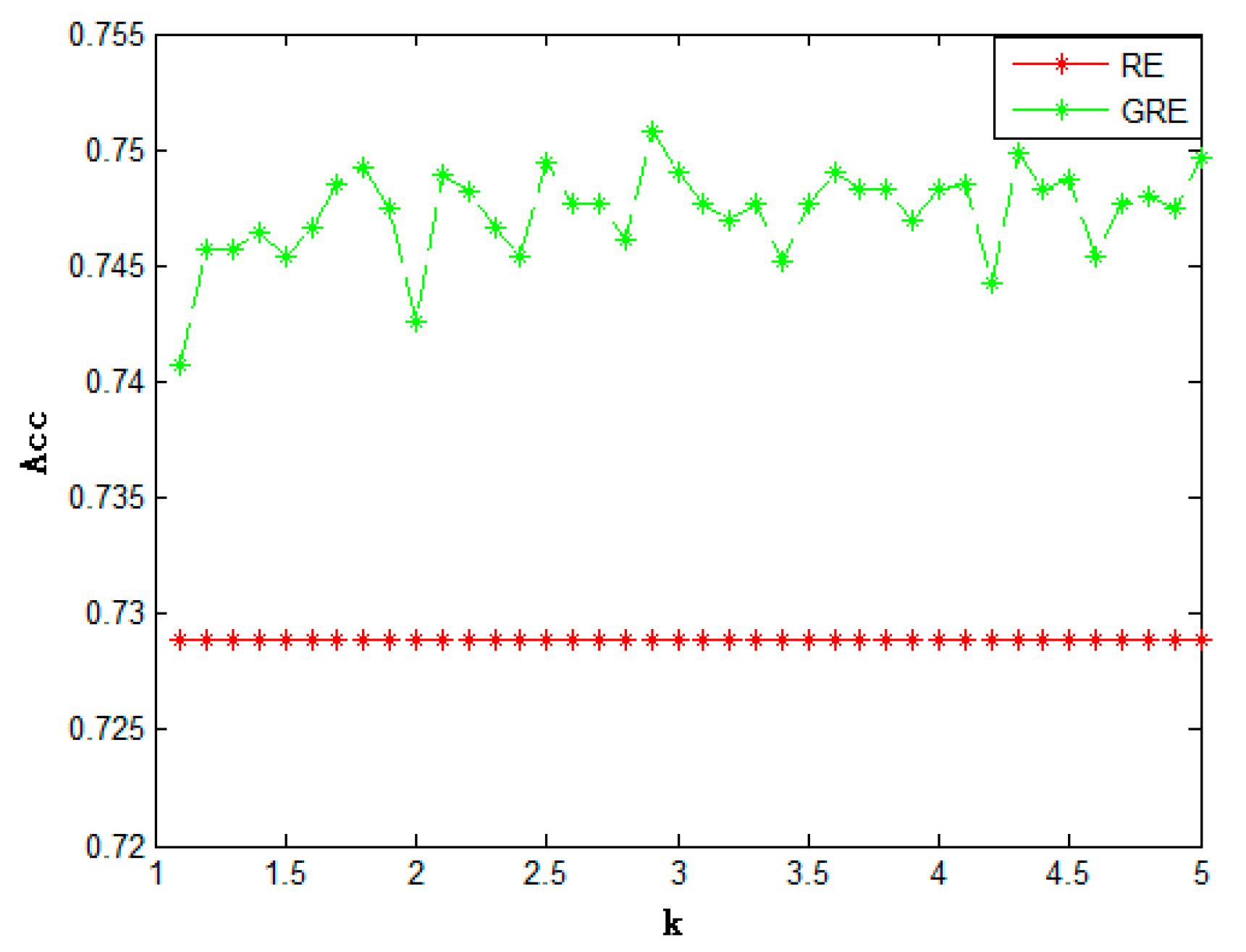

Negating this inequality produces the desired result, QED.

I wish to prove that relative entropy (Kullback-Leibler divergence) is always non-negative. Suppose we are building a deep neural network that classifies dogs and cats. For a dog picture, the probability that a perfect neural network classifies it as a dog is 1.

The relative entropy and mutual information concepts can be extended to the continuous case in a straightforward manner, and convey the same information. KL divergence is a measure of how two distributions differ from each other. It is a measure of the dissimilarity between two random quantities, in particular between two probability measures, as is the Kolmogorov-Smirnov distance [53].

In the proposed algorithm, we employ relative entropy (Kullback-Leibler divergence) to measure the degree of correlation between two different nodes [34]; its formula is shown as Eq. For nodes $v_i$ and $v_j$, $P_{v_i}$ and $P_{v_j}$ are their communication probability sequences.

Entropy maximization (MaxEnt) is a general approach to inferring a probability distribution from constraints that do not uniquely characterize that distribution.

How are KL divergence and log-likelihood related?

Correlation matrix based on relative entropy.

A detailed discussion of information-based methods is given in Ulrych & Sacchi (2006), specifically Bayesian inference, maximum entropy and minimum relative entropy (MRE).
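The non-negativity of relative entropy and the dog/cat classifier example can be checked numerically. The following is a minimal sketch, assuming discrete distributions given as plain Python lists over the same outcomes. The function names `kl_divergence` and `cross_entropy`, and the predicted probabilities, are illustrative assumptions and do not come from the original text.

```python
import math

def kl_divergence(p, q):
    """Relative entropy D(p || q) in nats for two discrete distributions."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # convention: 0 * log(0 / q) = 0
        if qi == 0.0:
            return math.inf  # p puts mass where q has none
        total += pi * math.log(pi / qi)
    return total

def cross_entropy(p, q):
    """H(p, q) = -sum_i p_i log q_i; assumes q_i > 0 wherever p_i > 0."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0.0)

# Hypothetical dog/cat classifier: the true label is "dog" with probability 1.
target = [1.0, 0.0]
predicted = [0.8, 0.2]  # illustrative model output, an assumption

kl = kl_divergence(target, predicted)
nll = cross_entropy(target, predicted)  # negative log-likelihood of "dog"

print(f"KL(target || predicted) = {kl:.4f}")
print(f"cross-entropy           = {nll:.4f}")
```

For a one-hot target the entropy of the target is zero, so the KL divergence coincides with the cross-entropy, which is the negative log-likelihood of the true class. This is one way to see the link between minimizing KL divergence and maximizing log-likelihood. The divergence is zero only when the two distributions agree, and positive otherwise.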
